NVIDIA CEO Jensen Huang called ChatGPT the “iPhone moment of AI”.

The product showed the world what AI can do. Suddenly, as The Verge’s Sundar Pichai meme from Google I/O suggests, AI is all anyone in tech can talk about.

Meanwhile, NVIDIA sells the essential tools for AI development, like picks and shovels in a gold rush.

That’s why its stock soared 200% this year, making it one of the few companies worth over $1 trillion.

Today, NVIDIA ranks as the sixth-largest company in the world.

This is not a lucky break. For more than a decade, NVIDIA has built the hardware and infrastructure to power apps like ChatGPT. Such large language models have billions to trillions of parameters, requiring enormous computation.

Jensen Huang saw the huge potential of AI and bet the company on developing NVIDIA’s capabilities across multiple industries.

NVIDIA has become the go-to for AI hardware and software. In this article, we’ll explain NVIDIA’s meteoric rise.

This article was written by a Financial Horse Contributor.

What Does NVIDIA Do?

NVIDIA popularised the graphics processing unit (GPU) and sells specialised chips that help to solve challenging computational problems. The company started by providing 3D graphics for games and now serves almost every industry, from architecture and media to automotive, scientific research and manufacturing design.

NVIDIA’s business today is much more complex. It still makes money from hardware but can increase margins and revenue by combining that with a platform that lets many developers use the same technology for diverse use cases.

A Brief History of NVIDIA’s CUDA, the Platform Powering the AI Boom

Let’s see how NVIDIA transformed from computer graphics to AI by looking at its history.

It’s a fascinating story. For a deep dive, check out the two-part podcast by Acquired on NVIDIA. We’ll look specifically at CUDA’s history, an important chapter for understanding why NVIDIA dominates the AI market today.

NVIDIA began by developing GPUs to improve image display for computers. GPUs are great at this task because they can process many small tasks simultaneously (such as millions of pixels on a screen). This is called parallelisation — a key term we’ll return to soon.

A CPU works differently. It runs processes serially — one by one on each of its cores. A CPU has fewer cores than a GPU, but each core is faster and more powerful.
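To make the contrast concrete, here is a minimal Python sketch of the same idea. It is only an illustration: the `brighten` function, the pixel values and the worker count are made up for this example, and Python threads stand in for GPU cores purely to show the structure of the work, not its real speed.

```python
from concurrent.futures import ThreadPoolExecutor


def brighten(pixel: int, amount: int = 40) -> int:
    """Brighten one 8-bit pixel value, clamping at the maximum of 255."""
    return min(pixel + amount, 255)


def brighten_serial(pixels: list[int]) -> list[int]:
    # CPU-style: a single worker handles one pixel after another.
    return [brighten(p) for p in pixels]


def brighten_parallel(pixels: list[int], workers: int = 8) -> list[int]:
    # GPU-style idea: no pixel depends on any other pixel,
    # so the work can be handed out to many workers at once.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(brighten, pixels))


pixels = [0, 100, 200, 250]  # hypothetical greyscale values
serial_result = brighten_serial(pixels)
parallel_result = brighten_parallel(pixels)
```

Both functions return the same values. The point is that the second version never needs one pixel’s result to compute another’s, which is the property that lets a GPU spread the work across thousands of cores.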

Back then, people thought graphics were the only big use for parallelisation. But in 2006, Stanford University researchers found that GPUs could speed up math operations in a way that CPUs couldn’t.

Jensen envisioned NVIDIA transforming from a graphics card maker to an accelerated computing company. In his recent commencement speech, he described NVIDIA’s purpose as helping “the Einstein and Da Vinci of our time do their life’s work.”

In 2006, NVIDIA created CUDA, a closed-source platform and API for parallel computing. The tool makes GPUs programmable for uses beyond graphics by opening up their parallel processing capabilities.
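For readers curious what “programmable” means here, the sketch below imitates CUDA’s programming model in plain Python. It is not NVIDIA’s API: `launch` and `vector_add` are hypothetical helpers written for this article. What it does borrow from CUDA is the convention that each thread works out which element it owns from its block index, the block size and its thread index.

```python
def launch(kernel, num_blocks, threads_per_block, *args):
    """Imitate a kernel launch by calling `kernel` once per (block, thread) pair.

    A real GPU runs these calls concurrently; this loop runs them one by one.
    """
    for block_idx in range(num_blocks):
        for thread_idx in range(threads_per_block):
            kernel(block_idx, threads_per_block, thread_idx, *args)


def vector_add(block_idx, block_dim, thread_idx, a, b, out):
    # Same index formula as CUDA's blockIdx.x * blockDim.x + threadIdx.x.
    i = block_idx * block_dim + thread_idx
    # Guard: the last block may contain more threads than remaining elements.
    if i < len(out):
        out[i] = a[i] + b[i]


a = [1.0, 2.0, 3.0, 4.0, 5.0]
b = [10.0, 20.0, 30.0, 40.0, 50.0]
out = [0.0] * len(a)

threads_per_block = 4
# Round up so that every element is covered by some thread.
num_blocks = (len(a) + threads_per_block - 1) // threads_per_block

launch(vector_add, num_blocks, threads_per_block, a, b, out)
```

Each “thread” touches exactly one element, so the same small function scales from five numbers to billions. That is the pattern deep learning workloads rely on.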

NVIDIA poured money into CUDA, but it took years to find a market and a business case for the platform. Remember, NVIDIA’s main market was gamers, and they didn’t need this capability. NVIDIA spent years and millions of dollars persuading developers and growing its install base.

The breakthrough came when a Princeton Computer Science professor started an image-classifying project called ImageNet. In 2010, the project launched an annual software contest where teams competed to create programs that could classify and detect objects correctly.

In the 2012 contest, a team submitted an algorithm that blew away the competition. It achieved a 15.3% error rate, 10.8 percentage points lower than the runner-up. The program, called AlexNet, was a convolutional neural network, a type of model from the branch of artificial intelligence called deep learning.
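A quick back-of-the-envelope calculation shows how large that gap was. The sketch below reads the 10.8 figure as percentage points, which is an assumption on our part, and uses variable names invented for this example.

```python
alexnet_error = 15.3  # percent, as quoted above
gap_points = 10.8     # assumed to be percentage points, not a relative change

# Runner-up error implied by the two figures above.
runner_up_error = alexnet_error + gap_points

# Share of the runner-up's errors that AlexNet eliminated.
relative_reduction = gap_points / runner_up_error

print(f"Implied runner-up error rate: {runner_up_error:.1f}%")
print(f"Relative reduction in errors: {relative_reduction:.0%}")
```

Under that reading, the runner-up misclassified about 26% of images and AlexNet cut the error count by roughly 41%, which explains why the result drew so much attention.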

This was a pivotal moment for AI and NVIDIA.

As Jensen puts it, the “GPU was at the center of the modern AI Big Bang, and really enabled deep learning to scale and to achieve its results.”

NVIDIA had found its killer use for CUDA. Deep learning is the most important application that needs high-throughput computation, which created a market for NVIDIA’s GPUs. Data scientists and research scientists around the world realised they could create powerful deep neural networks without being technical experts.

Today, CUDA has 4 million developers, over 3,000 apps, and 40 million downloads. 15,000 startups and 40,000 large enterprises build on NVIDIA’s platform.

NVIDIA’s Contribution to AI Today

Here’s how NVIDIA described its role in AI in its Q1 FY24 investor presentation, which it created with its generative AI toolkit.

“NVIDIA has pioneered the art and science of visual computing and AI. NVIDIA GPUs—the heart of deep learning—are central to AI. They power self-driving cars, intelligent machines and robots, and extraordinary scientific discovery. NVIDIA is building the future of AI and the GPU is at its core.”

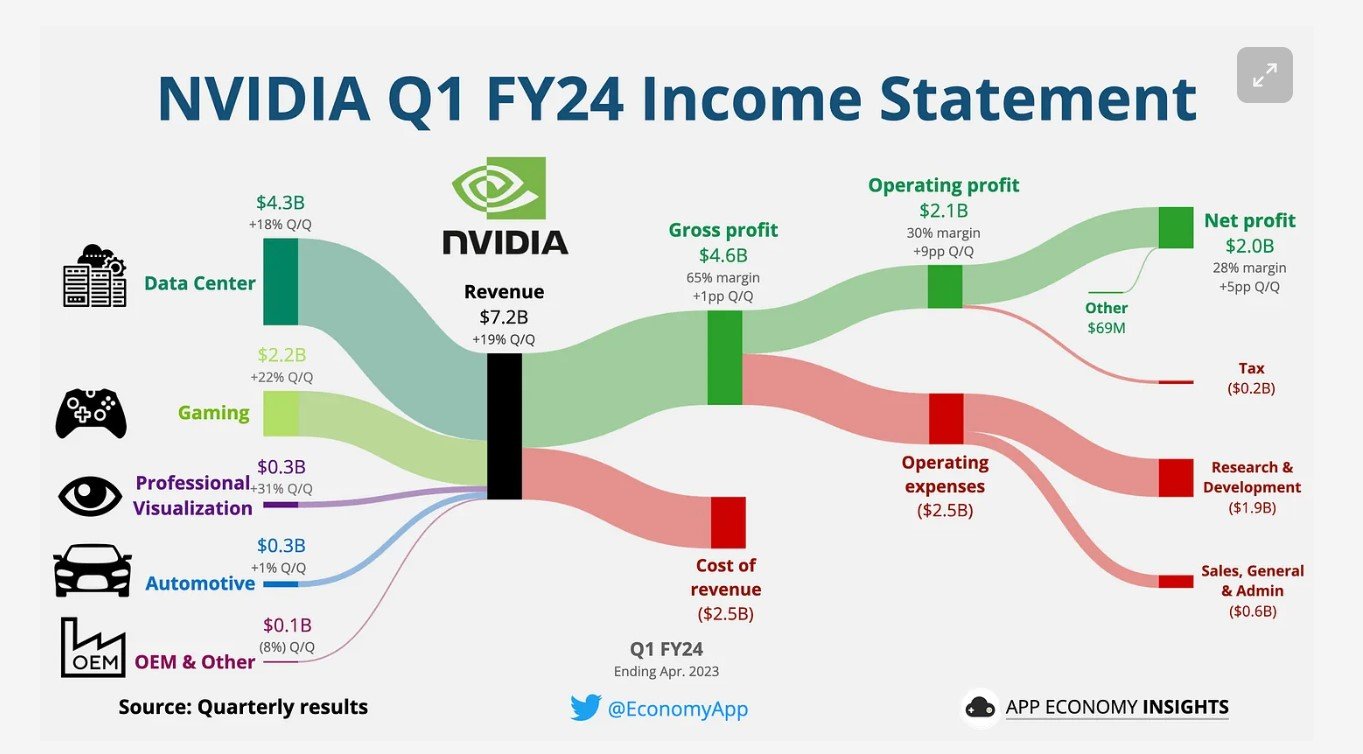

NVIDIA’s platforms and business model address four large markets: Gaming, Data Centers, Professional Visualization, and Automotive.

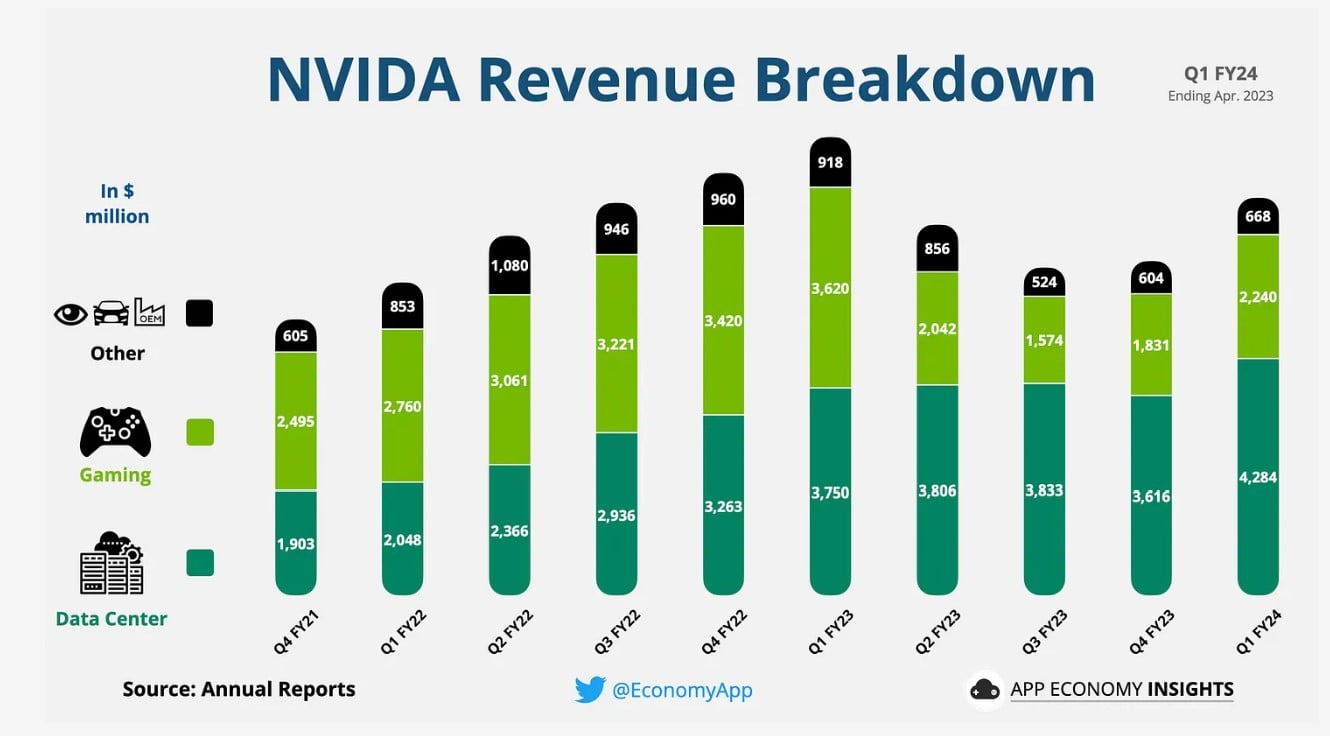

App Economy Insights has a great visualisation of how each segment contributes to NVIDIA’s total revenue.

NVIDIA’s Data Center business has exploded in recent years. It is newer than the Gaming business but already made up 60% of overall revenue in Q1 2023. ChatGPT unleashed a tidal wave of demand for NVIDIA’s products.

According to Jensen, this demand shows up in two ways.

The first is training. Cloud Service Providers like AWS and Microsoft Azure are building data centres around AI workloads. Generative AI startups like OpenAI also use NVIDIA’s chips to speed up product launches or reduce training costs over time.

The second is the widespread adoption of AI. ChatGPT currently has over 100 million users. The rapid adoption of generative AI has blown up usage.

NVIDIA can barely keep up with the demand because both are happening simultaneously.

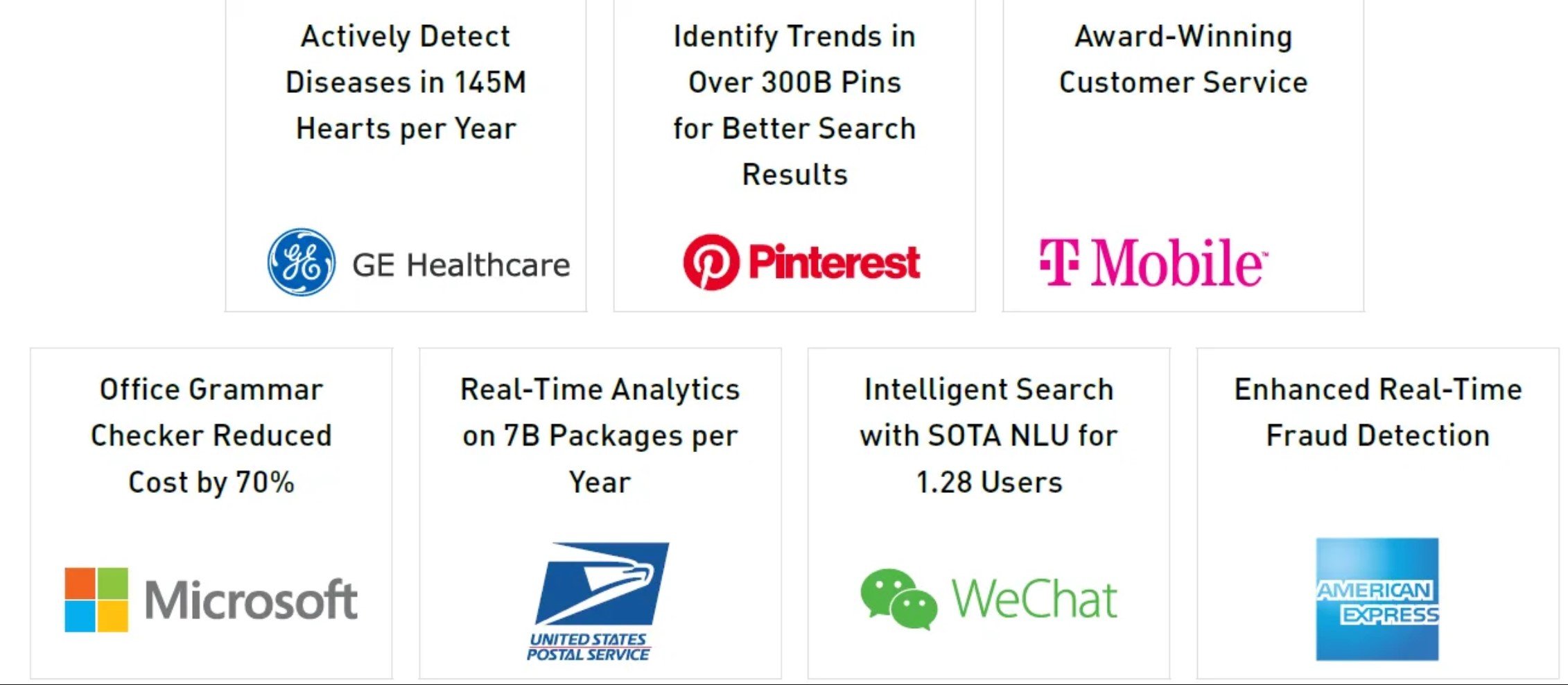

Consumer applications are the most visible use case. The same thing is happening across diverse industries as companies realise they must adopt AI to stay relevant. For example, NVIDIA’s AI platform is being used in predictive healthcare, online product and content recommendations, voice-based search, contact centre automation, fraud detection, and others.

How NVIDIA’s Products Power the Generative AI Boom

NVIDIA has invested in AI for decades. Now it is enjoying the rewards. It keeps launching new AI products at a fast pace.

The generative AI boom fuels NVIDIA’s Data Centre segment. CFO Colette Kress said in their Q1 2023 Earnings Call:

“Generative AI is driving exponential growth in compute requirements and a fast transition to NVIDIA accelerated computing, which is the most versatile, most energy-efficient, and the lowest TCO approach to train and deploy AI. Generative AI drove significant upside in demand for our products, creating opportunities and broad-based global growth across our markets.”

She mentioned three major customer categories:

- Cloud service providers (CSPs): All the big players (Amazon, Microsoft, Google) use NVIDIA products. They are gobbling up the H100 data centre GPU, one of the most powerful processors. Jensen, NVIDIA’s CEO, called it “the world’s first computer [chip] for generative AI”.

- Consumer Internet companies: For example, Meta powers its Grand Teton AI supercomputer with H100 chips.

- Enterprise: AI and accelerated computing touch all sectors. For example, Bloomberg launched a product for financial Natural Language Processing. It helps with sentiment analysis, entity recognition, news classification, and question answering.

NVIDIA’s AI Future

The AI revolution needs hardware and infrastructure. This will benefit NVIDIA with more demand for AI-optimised computers.

NVIDIA’s high valuation is debatable, at least according to Aswath Damodaran. But from a business perspective, NVIDIA has a strong edge.

Jensen said in an interview with Ben Thompson of Stratechery:

“We’ve been advancing CUDA and the ecosystem for 15 years and counting. We optimize across the full stack iterating between GPU, acceleration libraries, systems, and applications continuously all while expanding the reach of our platform by adding new application domains that we accelerate. We start with amazing chips, but for each field of science, industry, and application, we create a full stack. We have over 150 SDKs that serve industries from gaming and design, to life and earth sciences, quantum computing, AI, cybersecurity, 5G, and robotics.”

NVIDIA’s edge is like Apple’s. It comes from owning a powerful platform ecosystem for developers. This walled-garden ecosystem makes it hard to switch platforms, and that switching cost lets NVIDIA sell its hardware at high margins.

Conclusion

We are in the early days of the AI boom, and NVIDIA has a hefty share of the market. That market is small today, but it is likely to grow exponentially over the next few years.

According to Bank of America, the total addressable market for AI-optimised chips could grow to $60 billion by 2027, a market in which NVIDIA has about 75% share.

NVIDIA has built a strong platform ecosystem that attracts customers from various sectors and domains. It has also launched innovative products that enable generative AI, a fast-growing and demanding field.

NVIDIA’s business is thriving, but its valuation is high. Investors should weigh the risks and rewards of betting on NVIDIA’s AI future.